Greetings. My name is Hongbo Ma (马洪博), and I am a senior at Tsinghua University, majoring in Computer Science and Technology under Xinya College, with a minor in Economics and Management under the School of Economics and Management. I am nearing the completion of my program and am ready to pursue deeper research in my field.

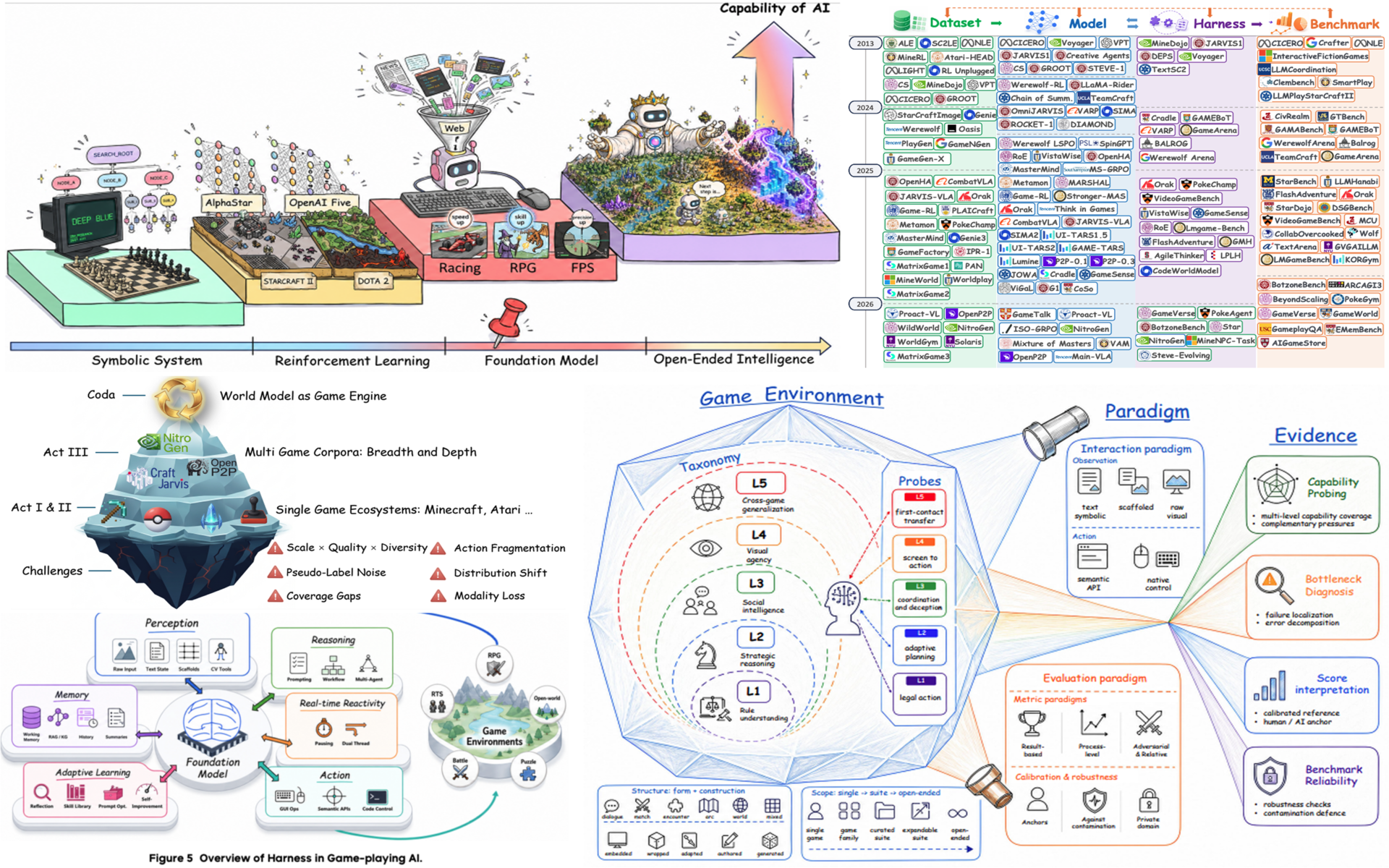

Specifically, my research focuses on generative models and multi-agent systems built on the concepts and framework of world models. My main objective is to develop embodied agents that can perceive, reason, and act in real-world environments. I explore ways to solve complex problems by using agents to analyze context and subsequently monitor the desired output.

For more detailed information, please review my CV and current publications below.

Current methodology focus

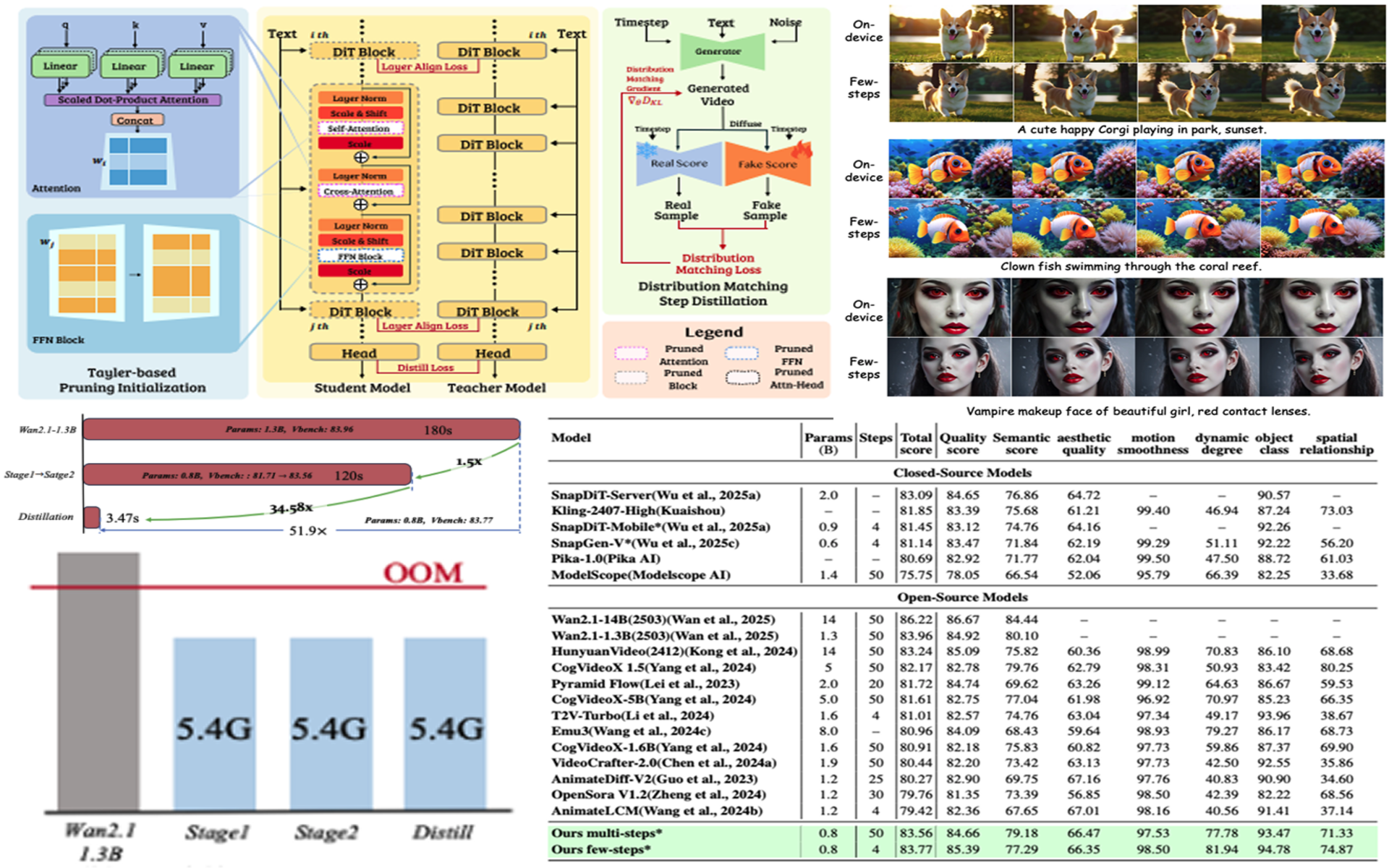

- Visual representation learning and generative modeling

- World and environment modeling

- Efficient reasoning and decision-making for multi-agent systems

- Training and generalization in real-world environments

Core Theme

World Models

A unified research loop from Real2Sim to Sim2Sim, and then back to robust and efficient real-world deployment (Sim2Real).

Part I: World Modeling (Real2Sim)

- Visual representation learning and generative modeling

- World and environment modeling for structured simulation spaces

- Building high-fidelity, controllable simulators from real observations

Part II: Multi-Agent Systems (Sim2Sim)

- Perceiving: learn grounded state understanding in simulated worlds

- Decision-making: reason and plan with efficient policy mechanisms

- Acting: optimize agent behaviors through closed-loop interaction

Part III: Real Deployment (Sim2Real)

- Efficient reasoning and execution under real-world constraints

- Training and generalization in dynamic real environments

- Deployment-centric adaptation loop for reliable long-horizon behavior

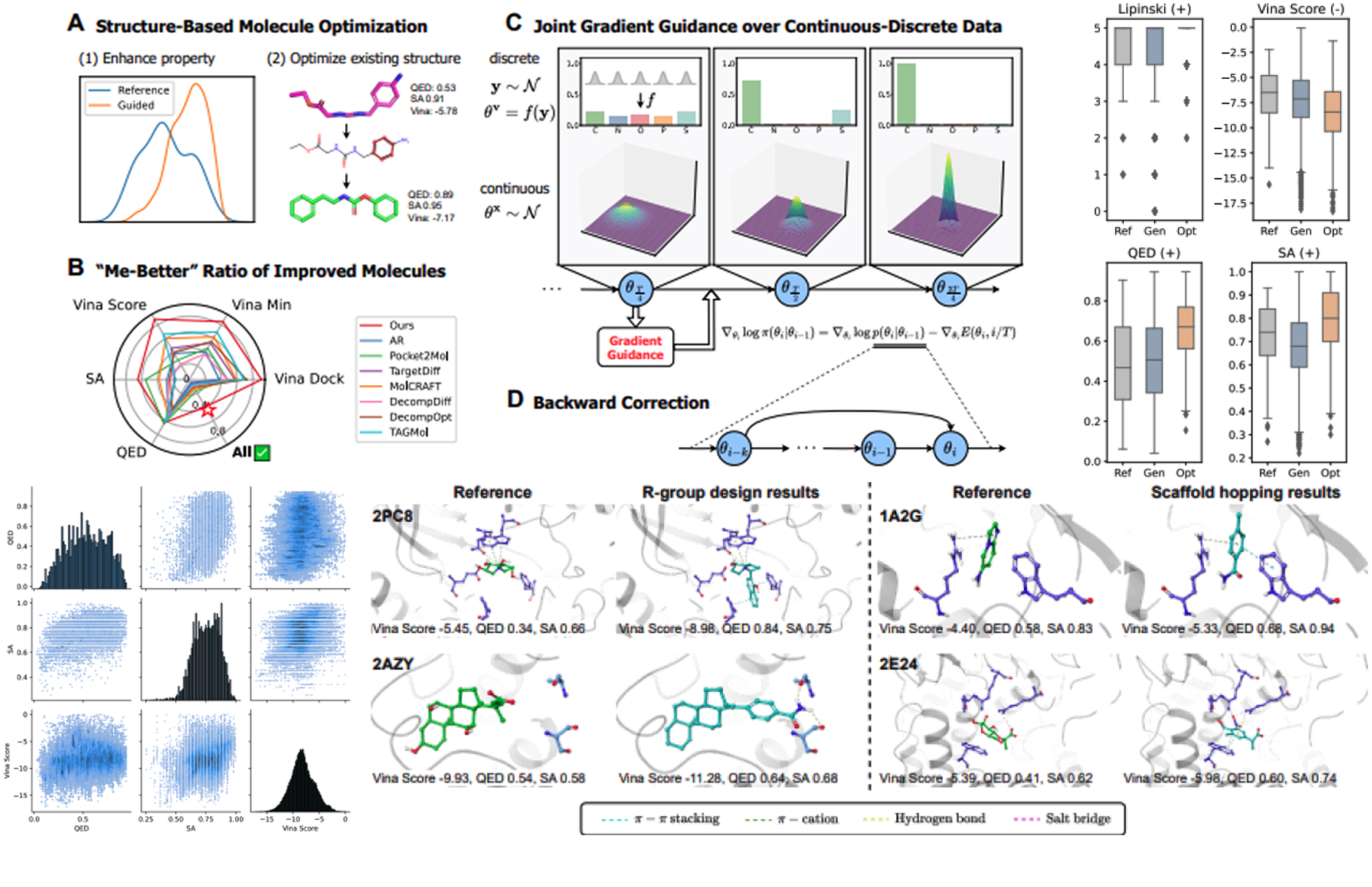

Extension: Scientific Agents

Following the world-model research route, we first abstract real scientific environments into controllable modeled spaces, then solve tasks such as molecular design and experiment planning in the abstracted environments, and finally feed validated strategies and hypotheses back into real-world settings.

News

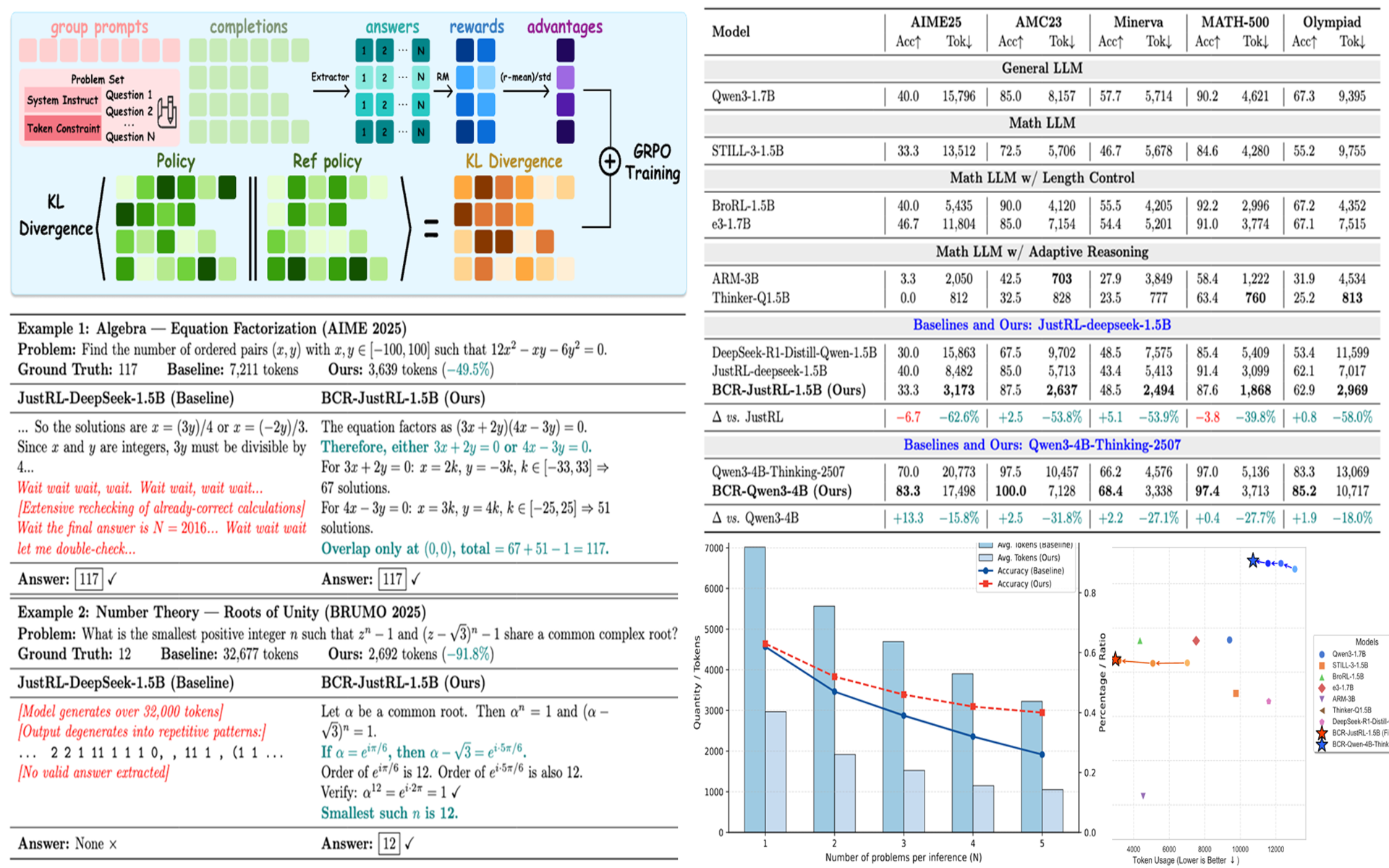

- 2026.5: BCR is accepted by ICML 2026! 🎉🎉🎉

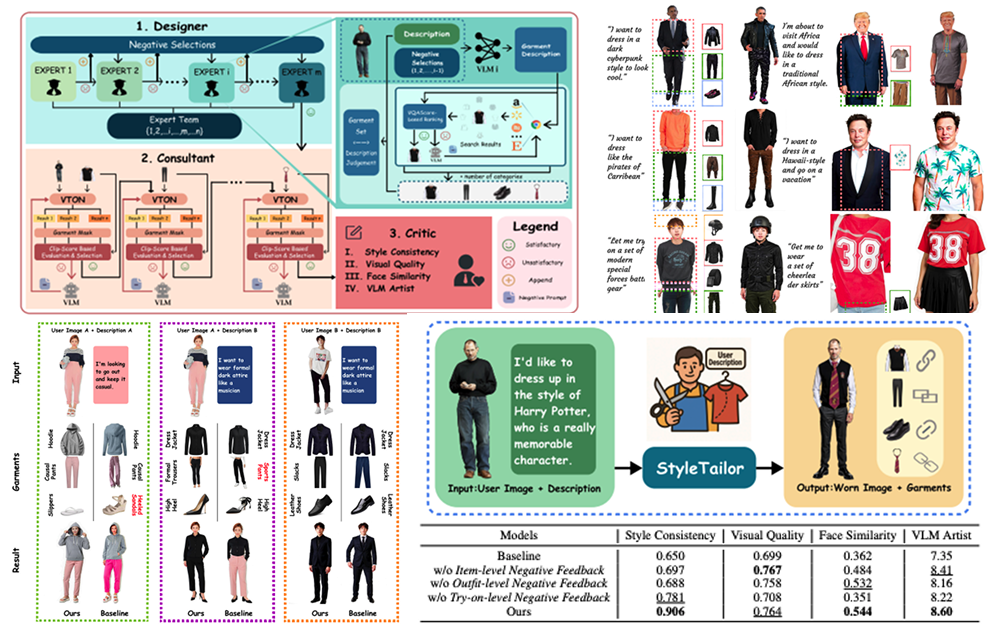

- 2025.11: StyleTailor is accepted by AAAI 2026 and selected as Oral (Top 4.5%)! 🎉🎉🎉

- 2025.5: One paper is accepted by ICML 2025! 🎉

- 2025.1: One paper is accepted by ICLR 2025! 🎉

Publications

Auditing Generated Skill Libraries for Embodied Language Agents

From Melee to Consensus: Adaptive Moderated Debate for Inference-Time LLM Reasoning

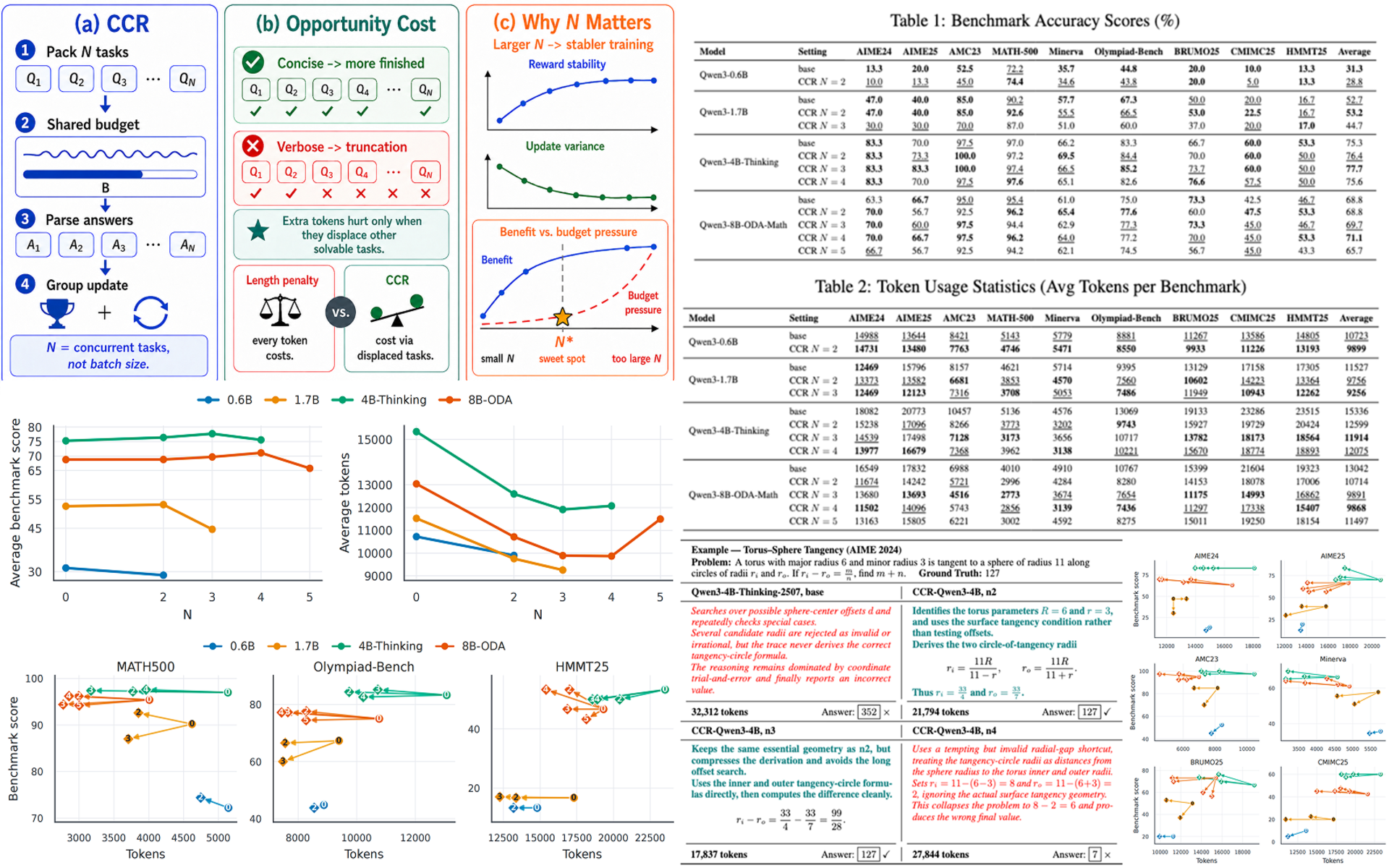

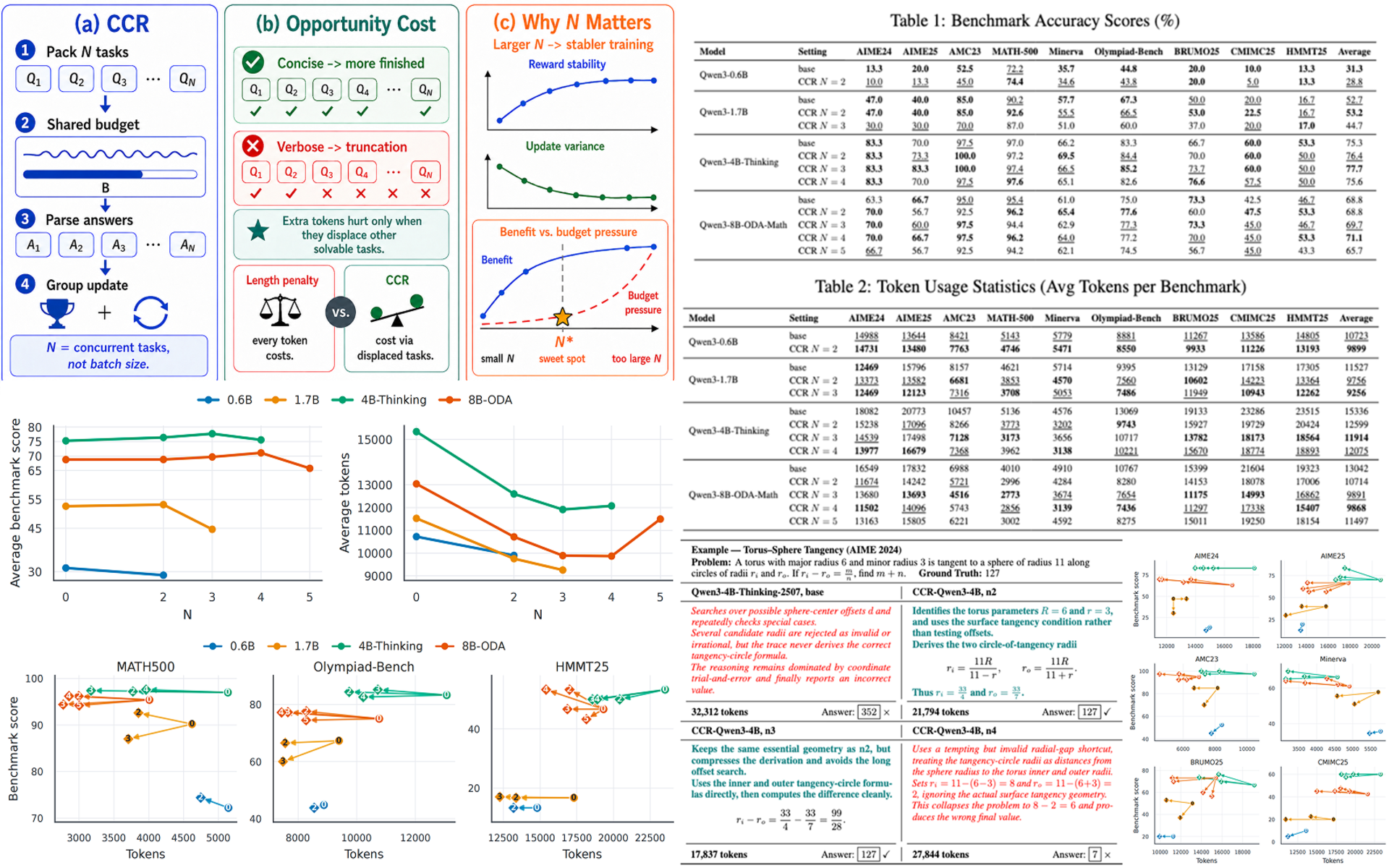

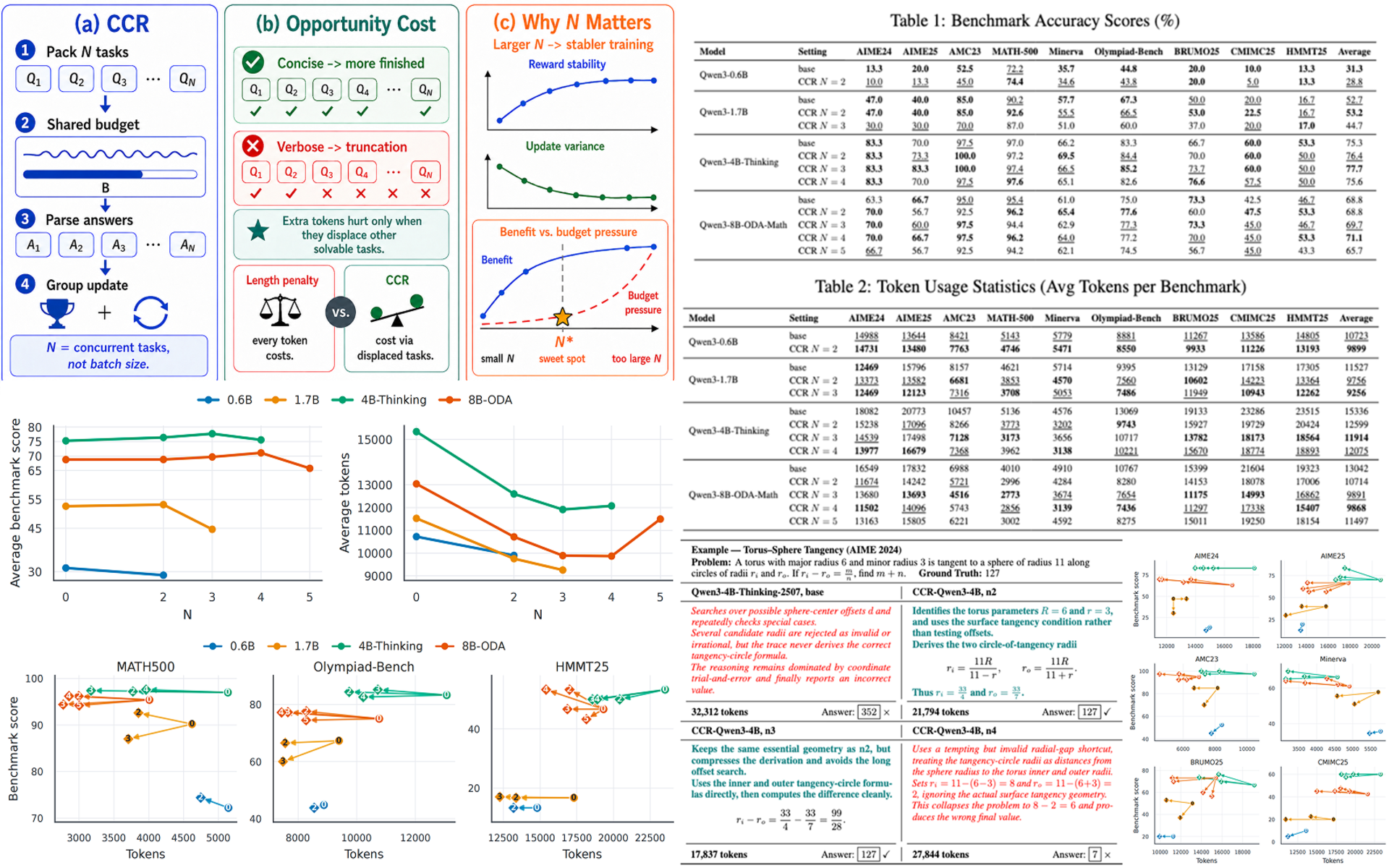

Concurrent Constraint Reinforcement

Batched Contextual Reinforcement


Education

- 2023.09 - Present: B.S. in Economics and Management (Minor), Tsinghua University

- 2022.09 - Present: B.S. in Computer Science and Technology (Major), Tsinghua University

Internships

- 2025.12 - Present: Research Intern, Siebel School of Computing and Data Science, University of Illinois Urbana-Champaign.

- 2025.09 - 2025.10: Research Intern, i-Vision Group, Tsinghua University.

- 2025.05 - 2025.08: Research Intern, Guangming Lab (Shenzhen), Shenzhen.

- 2024.07 - 2025.02: Research Intern, GenSi Lab, Tsinghua University.

- 2024.02 - 2024.06: Research Intern, ATOM Lab, Tsinghua University.

Services

- NeurIPS 2026, Reviewer

- ICML 2026 Workshop AI4Math, Reviewer

Blogs

If you’d like to follow more of my ideas and updates, feel free to visit my Zhihu for longer reflections, my Rednote for lighter day-to-day sharing, and my local blog page for organized posts and archives.